How Figma, Midjourney, Databricks, and Modyfi Harness AI with Design

These days, it seems like everyone has thoughts about the future of AI. We’ve heard numerous predictions, ranging from AI taking over various jobs and aiding in the creation of healthier snacks, to potentially replacing our pet cats and dogs with robotic companions.

But for those of us who are actually involved with building and designing the next iteration of AI and AI-integrated products, the future looks very different. While a lot still remains to be determined, we’re starting to get a sense of where this powerful technology could take us and what that will mean for a wide range of industries. Whether you’re a founder building an AI product or a designer interested in AI, it’s helpful to understand what the people actually working on the ground think.

To unearth new insights, we recently held a series of live panels with Foundation Capital and NEA, where we spoke to folks who are currently building AI tools, products, and platforms. Led by Steve Vassallo, General Partner at Foundation Capital, Vanessa Larco, Partner at NEA, and Enrique Allen, Co-founder of Designer Fund, we heard from:

- Noah Levin, Vice President of Product Design at Figma

- Nadim Hossain, Vice President of Product Management at Databricks

- Greg Hochmuth, Senior Engineer at Midjourney who focuses on new interfaces for mobile, web, and beyond

- Joe Burfitt, Founder & CEO of AI design platform Modyfi

Over the course of the discussions, they shared insights on AI that are ahead of the curve. Keep reading to learn where the experts think AI is headed—and what kind of a role design will play in it.

Key Takeaways:

- AI will raise the ceiling and lower the floor for design

- AI will replace component-based design work so that designers can focus on end-to-end flows

- AI design will emerge as a critical role within design teams

- There's a major opportunity to develop ML models specifically for UI/UX design

- Chat isn’t dead—it’s just getting started

- AI should help users figure out what they really want

- AI is powerful in multiplayer mode

- How you create and communicate your guardrails is a life-or-death situation

- The future is unwritten, so keep experimenting

“There's so much development happening on the engineering side regarding AI, so much amazing progress. And yet, there’s so much more to do in design, specifically, that isn’t talked about enough.”

Noah Levin, VP of Product Design, Figma

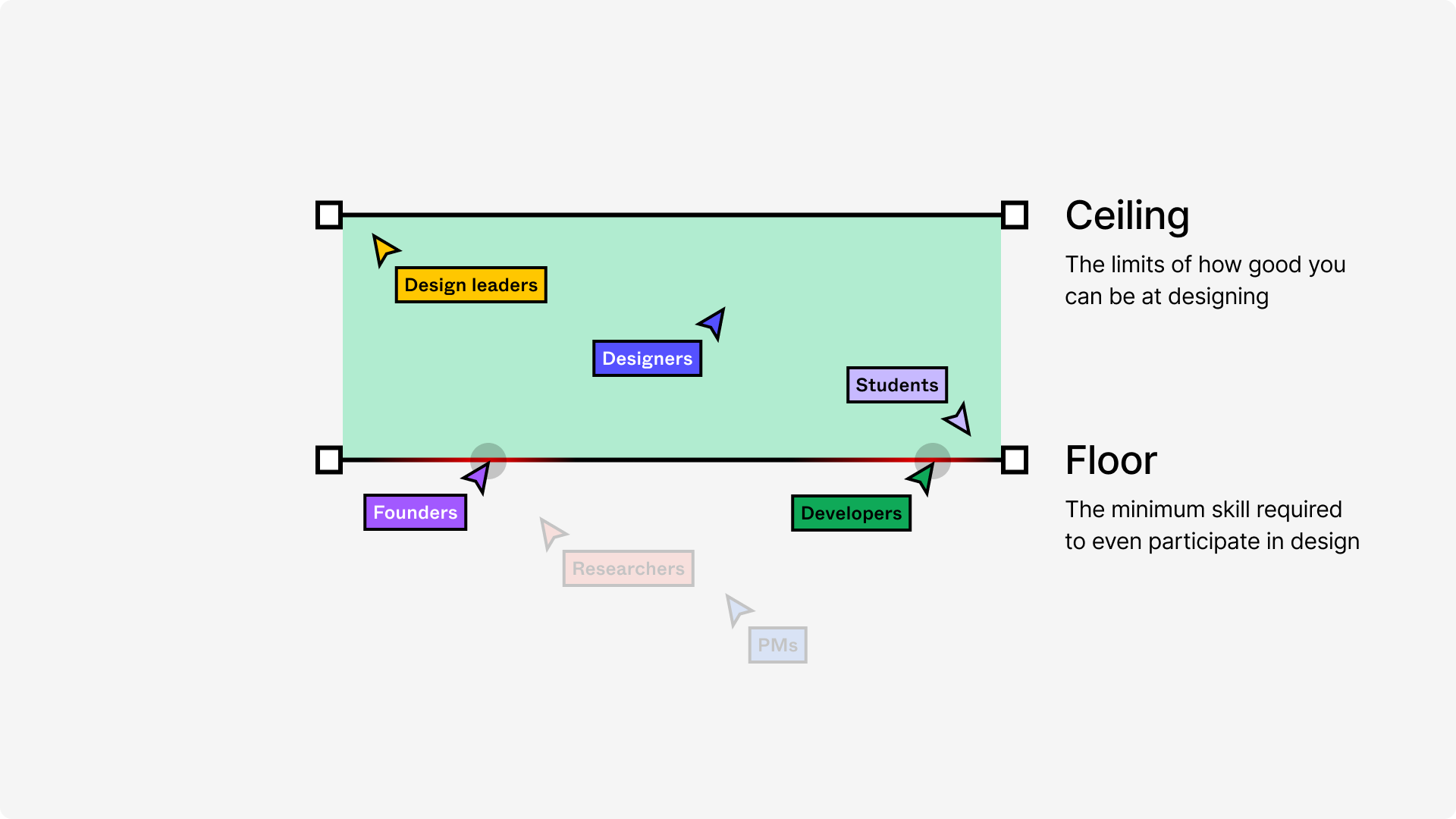

1. AI will raise the ceiling and lower the floor for designers

While many news headlines want you to believe that AI will either give us superpowers or take away all of our jobs, the truth is probably somewhere in between. “The idea isn’t to replace designers, or even to help them make the same things they made before,” Joe clarifies. “AI is allowing designers to be creative in ways they weren’t able to before.”

Another way of looking at this is to examine how AI will expand our capabilities depending on our existing skillsets. “One frame that we've been using for AI is the idea of lowering the floor and raising the ceiling,” shares Noah. “The idea is when you lower the floor to something, you're making it more accessible, which has actually been Figma’s mission in general—to make the design more accessible.”

AI has the potential to lower barriers to entry, enabling anyone to create and prototype new designs. On the flip side, these same tools can raise the ceiling by enhancing the capabilities of professionals and improving their efficiency. For example, AI can accelerate and simplify design by visualizing concepts, streamlining the design process, and allowing for better decision-making.

If you remember just one takeaway about AI in the context of design, let it be this: AI will both raise the ceiling and lower the floor for designers. This was one of the insights we highlighted in our roundup of The Top 10 Talks on the Future of AI and Design From 2023, and one that Steve highlighted in his Forbes article recapping the discussion, Why Generative AI Needs Design.

2. AI will replace component-based design work so that designers can focus on end-to-end flows

Today, designers do much of their work manually, individually selecting tools to draw shapes or create buttons and pulling from sticker-sheet-like component libraries. Noah and Joe believe that AI will ultimately make all of this pixel-pushing obsolete.

“We nudge way too much. I don't want to know how often we’re spending time as designers just making things aligned. The computer should fix that for us, right? It should make it easier to align things.”

Noah Levin, VP of Product Design, Figma

Joe sees AI as an opportunity to focus on the best parts of design, leaving the routine parts for a machine to handle. He explains: “There are moments in every creative workflow where you can feel your soul leaving your body as you perform the same rote task for the umpteenth time that week. These are the parts of the creative process where AI has the capacity to restore, not encroach, upon human creativity. With AI as creative co-pilot, designers can easily delegate the most arduous parts of their day, and stay in a creative flow.”

AI-enabled design tools can also facilitate end-to-end product experiences, freeing design teams to focus on the bigger picture. “As a leader, I’m constantly reminding myself as well as the team: What’s the context of this moment? What’s the whole journey? But when our tools are so hard to use, or we’re fighting against them, we can’t even think about the strategy because we’re spending too many hours just making the thing real,” Noah explains.

3. AI design will emerge as a critical role within design teams

As AI becomes a part of more products, it will be more important than ever to think about how AI shows up across your user experience. A skilled AI designer and content designer can help establish the foundations, rules, and processes that help others create with AI.

As Noah explains, “We had a systems problem with how we represented AI. One person used a sparkle icon and thought of it as a tool. One person was like, ‘No, it’s an assistant and it needs to be a chat box.’ And one person was like, ‘No, it’s actually an environment.’ These were all independently valid answers, but you still need to pick one at some point. We’re making progress on that.”

Founders also face a tradeoff: make the technology user-friendly and relatable to non-technical users by drawing on their everyday experiences, or build for Silicon Valley expectations and lingo. How do you make your products really helpful and approachable for the majority of people who are going to use them?

“The smartest person you know is kind of annoying. You're not gonna listen to them if they don’t deliver their message well, right? And we all know people like that. So if the AI is all-knowing but delivers the message poorly or in a way that’s not acceptable, then it’s not going to work.”

Nadim Hossain, VP of Product Management, Databricks

4. There's a major opportunity to develop ML models specifically for UI/UX design

Large Language Models (LLMs) are great for helping with brainstorming and idea generation (think ChatGPT), but they’re not great at generating usable layouts. Today, it makes sense that companies are building on what’s available, but designers will ultimately need more.

As Noah explains, “It makes sense to offer things that these AI models are good at. But when you move on from brainstorming to designing, you’re dealing with a different framework. Large language models are a little bit rigid. They’re not good at abstract positioning. We have to figure out what models do we use, what combinations of them make sense for something so visual.”

In the future, AI will enable designers to create combinations more efficiently and, more importantly, to train the AI behind their design systems.

“Imagine training on your company's design system enough that you can just say, ‘We’re trying to solve this problem, and this is a modal that might be helpful here.’ And instead of literally having to grab each asset one at a time, you should be able to work in patterns. You should be able to put together combinations of things that let you focus on the flows and the really, really important moments,” Noah shares.

“One problem we face is there’s so much possibility. What’s the solution for making AI amazing for designers? I don’t know that we know that yet.”

Noah Levin, VP of Product Design, Figma

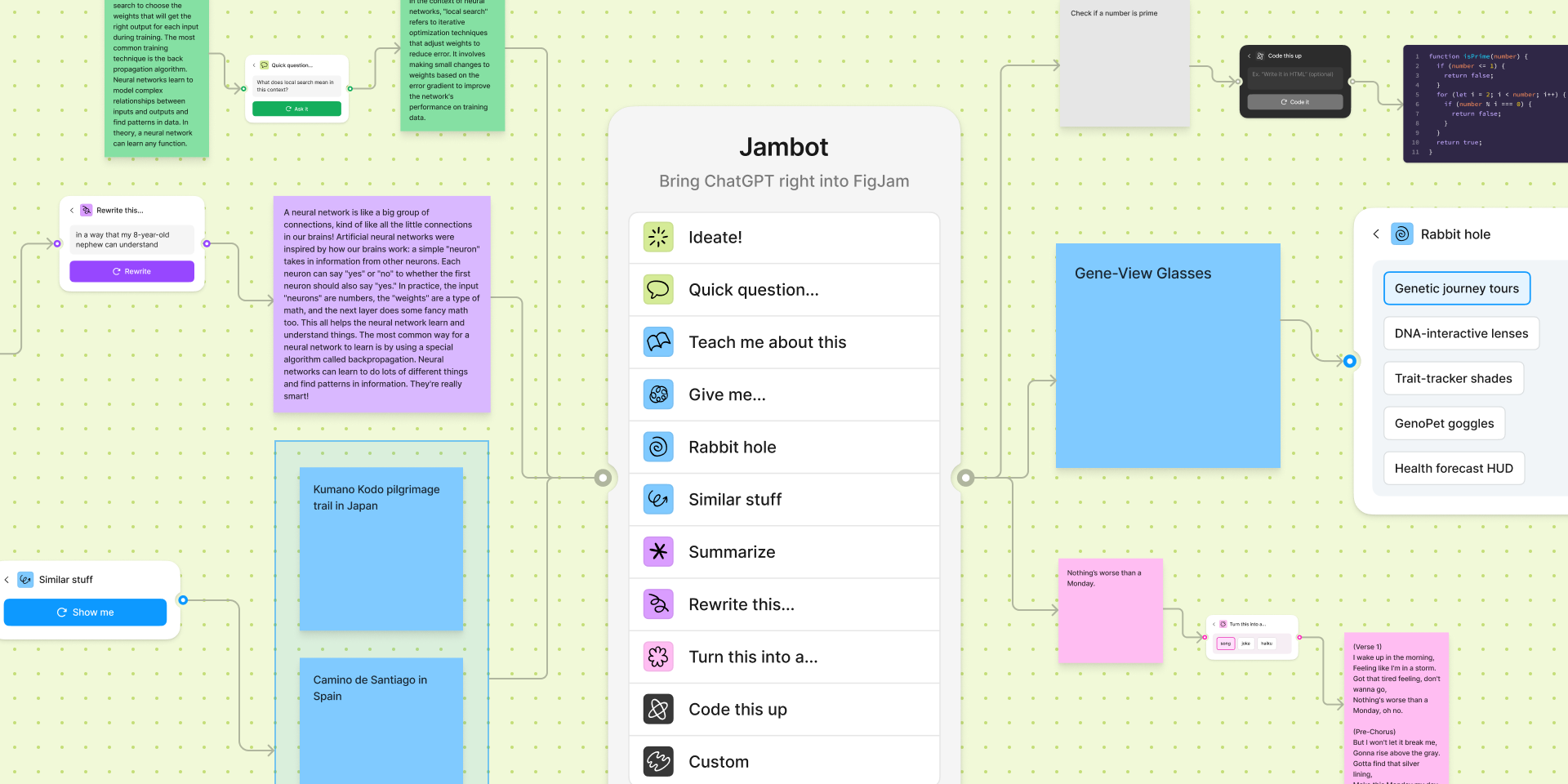

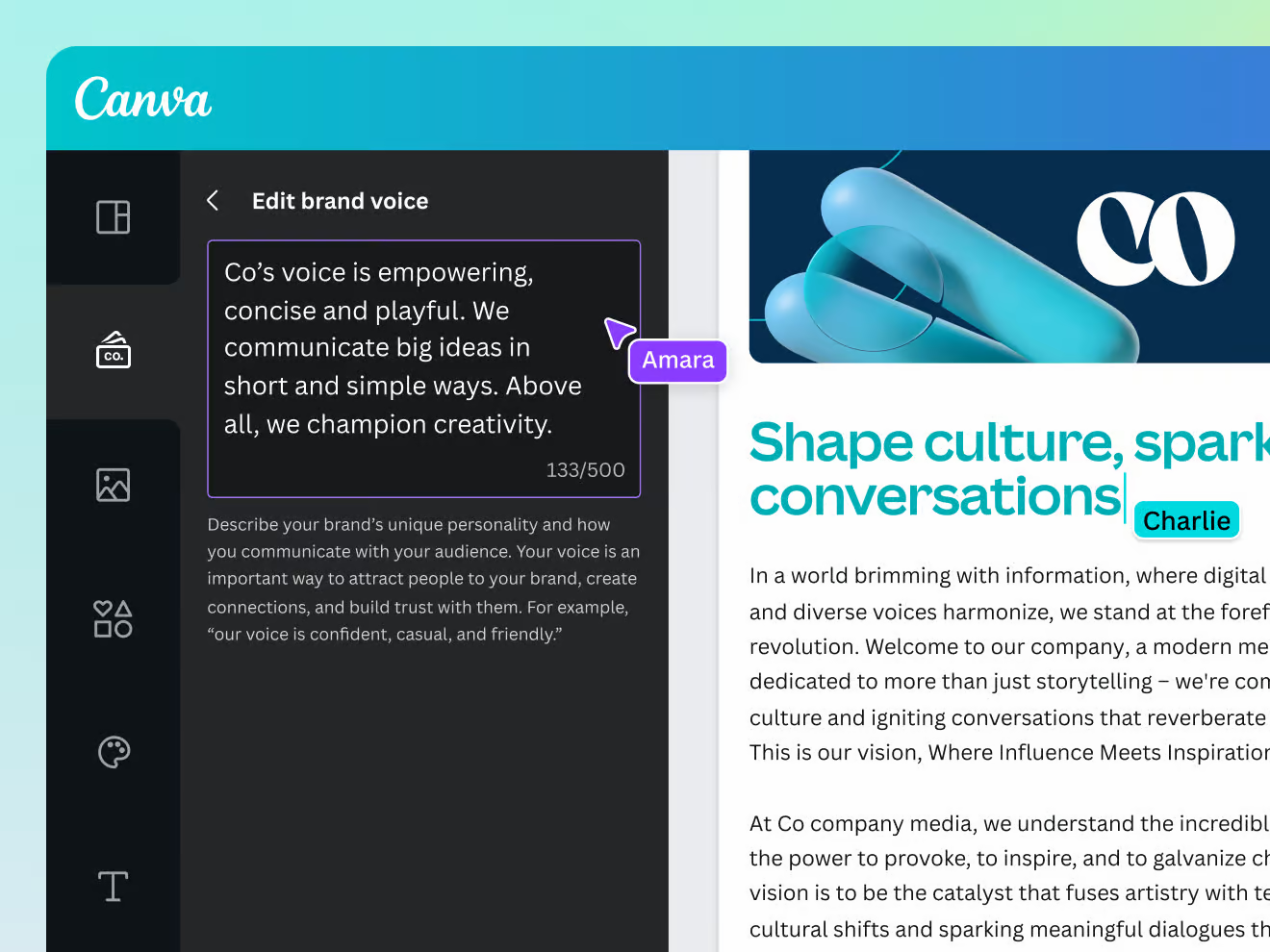

5. Chat isn’t dead—it’s just getting started

Where do thought leaders stand on chat? Noah believes in the potential for chatbots down the road. He agrees with Amelia Wattenberger’s argument that chatbots are not the future of interfaces and that we should explore alternatives, but he notes that chatbots are also incredibly intuitive and easy to use, and that they provide a feedback loop for AI to iterate on and improve over time. They can also be used with in-context AI features, like summarization or Canva's Brand Voice feature.

“It’s a very intuitive thing to learn how to talk to something. We’ve been using Google for a very long time to type in basic queries and requests. We’ve been talking to people as a civilization for way longer than that, using a telephone, texting people. It’s a familiar, intuitive interface,” Noah explains.

Greg agrees that we haven’t found the right format for input. He shares, “The right interface doesn’t exist yet. Text and text prompting was the easy one. It was the immediate one because that’s how the models were trained.”

🔎 In our AI x Design survey, we asked: If we zoom forward three years, is the most common starting point for the web a Google search bar or a new AI-powered chat interface like ChatGPT?

30% of respondents chose “Chat interface,” and another 30% chose “Search bar.” One person responded, “They will be the same thing,” another responded, “3D,” and another shared, “I hope that there will be a more proactive interface that tells me what I should or want to know without having to ask for it.”

6. AI should help users figure out what they really want

For Greg, the main issue with text-based inputs like chat is that often, people don’t know what they want. “And if they don’t know what they want exactly, they have a hard time putting into words or even knowing which words to tell the machine, or how to modify what they get from the machine,” he explains.

This will become a key focus for product developers and designers to figure out—how to onboard and train users so that they have the necessary skills to get what they want from AI.

Midjourney does a good job of this by having image generation requests be publicly visible through Discord, helping people learn from other people instantly. Seeing what other users have accomplished can be a powerful motivator. Think about how this has worked with previous platforms and technologies. For example, early Instagram helped inspire users by recommending photos and creators.

AI does its best to fulfill a user’s exact wishes, but it falls short in helping them discover and understand their true desires. Ultimately, AI, along with how it is introduced, distributed, and surrounded by community, should play a role in guiding users to figure out what they really want.

7. AI is powerful in multiplayer mode

When groundbreaking technology emerges, people naturally want to come together to process and explore its possibilities. AI should be designed with a default mindset of fostering collaboration and community. While ChatGPT offers a fascinating solo experience, there's even more potential in leveraging AI as a tool for collective exploration and interaction.

As Greg puts it: “I think AI wants to be designed as multiplayer. Whenever there’s a new technology in the world, people want to be around each other for it and they want to come together to experience it and be like, ‘Are you seeing what I'm seeing?’”

Joe takes this one step further, imagining a future where AI is our partner in that creative process, not just a result. He shares, “Today’s SaaS applications are all about being multiplayer. Currently that means human to human, but we can add AI into that multiplayer modality and start interacting with it in new and interesting ways.”

“I think ‘time to trust’ is important to quantify. If you’re dealing with an AI, what would it take you to trust it? I think that’s going to be important with AI interfaces.”

Nadim Hossain, VP of Product Management, Databricks

8. How you create and communicate your guardrails is a life-or-death situation

Don’t hide from users that they’re interacting with a machine, not a human. AI is just a tool, a bot, without its own intentions or emotions. As stunning as Midjourney may be, Greg explains, it still just provides answers to our questions. It’s not anthropomorphized and doesn’t get “cute” with users.

Build in your own guardrails for safety. “How is ChatGPT like a Stanford MBA? It's confident and wrong,” jokes Nadim. “As product folks, we have to add the guardrails in judgment.”

Self-driving car companies have come a long way in optimizing human safety, but there are still risks that come with relying on AI. Product teams have a responsibility to establish guardrails to prevent AI issues, adverse actions, and misunderstandings—as well as to warn users when AI is unsure or unable to perform accurately.

You may need to develop your own safety guidelines and principles to ensure users are protected, because government agencies are unlikely to keep pace with advances in technology.

🔎 In our AI x Design survey, we asked: Who should be most responsible for driving AI safety and mitigating its adverse risks and effects? Should it be governments, companies, or the users themselves?

Responses were split evenly among governments, companies, and a combination of governments and companies. One person responded, “I think companies should be, but I don't think that companies will be responsible enough.”

9. The future is unwritten, so keep experimenting

For all the predictions out there, the future of AI is still to be determined. There are still so many unknowns, and all of the experts we spoke with urged experimentation. Likely, in a year's time, there will be clearer practices, examples, and success stories of AI integration.

Joe urges designers to get familiar with tools today to improve their opportunities tomorrow. He explains, “Designers should build AI literacy now to understand its limitations and opportunities. It will only become a bigger part of creatives’ day-to-day over time, but much of the commentary around AI is couched in extreme, conflicting declarations.”

How can you start building your own AI literacy? The best way to learn is through trying out new things. As Nadim puts it: “AI is one ingredient, and it's like saying, ‘Hey, we invented salt.’ Okay, great. How’s that gonna change cooking? You have to start using salt as an ingredient to find out.”

“Designers should build AI literacy now to understand its limitations and opportunities. It will only become a bigger part of creatives’ day-to-day over time, but much of the commentary around AI is couched in extreme, conflicting declarations.”

Joe Burfitt, Co-founder of Modyfi